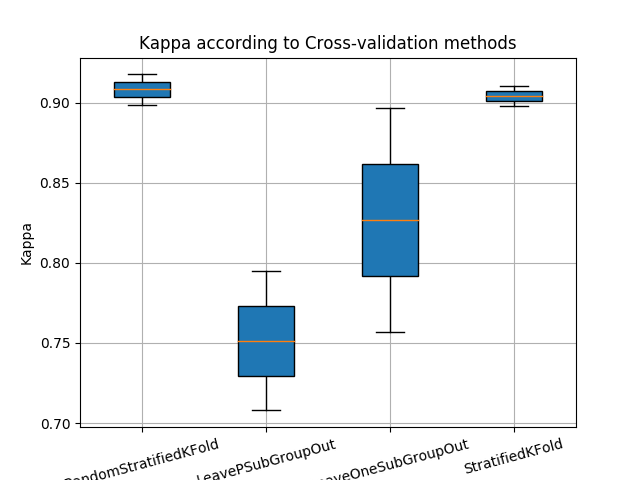

Learn with Random-Forest and compare Cross-Validation methods

This example shows how to train a Random-Forest classifier with several cross-validation methods and compare the kappa scores each method yields.

Import libraries

from museotoolbox.ai import SuperLearner
from museotoolbox import cross_validation
from museotoolbox.processing import extract_ROI
from museotoolbox import datasets
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold

Load HistoricalMap dataset

raster, vector = datasets.load_historical_data(low_res=True)
field = 'Class'
group = 'uniquefid'
X, y, g = extract_ROI(raster, vector, field, group)

Initialize Random-Forest

classifier = RandomForestClassifier(random_state=12,n_jobs=1)

Create a list of different cross-validation methods

CVs = [cross_validation.RandomStratifiedKFold(n_splits=2),
       cross_validation.LeavePSubGroupOut(valid_size=0.5),
       cross_validation.LeaveOneSubGroupOut(),
       StratifiedKFold(n_splits=2, shuffle=True)  # from sklearn
       ]
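The list above mixes two families of splitters: random stratified folds, which can place pixels from the same polygon on both sides of a split, and sub-group methods, which hold out whole groups. The sketch below illustrates the group-holdout idea in plain Python. It is not museotoolbox's implementation: the helper `leave_one_group_out` and the toy group ids are hypothetical, and the real classes also take the class label into account.

```python
from collections import defaultdict


def leave_one_group_out(groups):
    """Yield (train_idx, valid_idx) pairs, holding out one whole group at a time."""
    by_group = defaultdict(list)
    for idx, group_id in enumerate(groups):
        by_group[group_id].append(idx)
    for held_out in sorted(by_group):
        valid_idx = by_group[held_out]
        train_idx = [i for group_id, idxs in sorted(by_group.items())
                     if group_id != held_out for i in idxs]
        yield train_idx, valid_idx


# Toy data: six pixels drawn from three polygons (hypothetical group ids).
groups = [1, 1, 2, 2, 3, 3]
splits = list(leave_one_group_out(groups))
for train_idx, valid_idx in splits:
    train_groups = {groups[i] for i in train_idx}
    valid_groups = {groups[i] for i in valid_idx}
    # No polygon contributes pixels to both sides of the split.
    assert train_groups.isdisjoint(valid_groups)
    print(train_idx, valid_idx)
```

Because neighbouring pixels of one polygon never appear in both sets, a group-based split tends to give a more conservative accuracy estimate than a purely random one.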

kappas = []
for cv in CVs:
    # Fit a SuperLearner with the current cross-validation method
    SL = SuperLearner(classifier=classifier, param_grid=dict(n_estimators=[50, 100]), n_jobs=1)
    SL.fit(X, y, group=g, cv=cv)
    print('Kappa for ' + str(type(cv).__name__))
    cvKappa = []
    # Collect the kappa of each fold
    for stats in SL.get_stats_from_cv(confusion_matrix=False, kappa=True):
        print(stats['kappa'])
        cvKappa.append(stats['kappa'])
    kappas.append(cvKappa)
    print(20*'=')

Out:

Kappa for RandomStratifiedKFold
0.9177889428851054
0.8989253671111543
====================
Kappa for LeavePSubGroupOut
0.7948119033434562
0.7078871023125154
====================
Kappa for LeaveOneSubGroupOut
0.8970485707645829
0.7571515766489976
====================
Kappa for StratifiedKFold
0.9105668061997964
0.8981956129176757
====================
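For readers unfamiliar with the statistic printed above, Cohen's kappa compares the observed agreement between predicted and reference labels with the agreement expected by chance. The following is a minimal sketch of that textbook formula on a made-up confusion matrix. The function name `cohen_kappa` and the matrix values are illustrative assumptions, not part of museotoolbox or of this example's data.

```python
def cohen_kappa(confusion):
    """Cohen's kappa from a square confusion matrix (rows: reference, columns: predicted)."""
    n = sum(sum(row) for row in confusion)
    # Observed agreement: share of samples on the diagonal.
    observed = sum(confusion[i][i] for i in range(len(confusion))) / n
    # Chance agreement: product of the marginal totals, class by class.
    row_totals = [sum(row) for row in confusion]
    col_totals = [sum(col) for col in zip(*confusion)]
    expected = sum(r * c for r, c in zip(row_totals, col_totals)) / n ** 2
    return (observed - expected) / (1 - expected)


# Hypothetical two-class confusion matrix, for illustration only.
toy_matrix = [[45, 5],
              [10, 40]]
kappa = cohen_kappa(toy_matrix)
print(round(kappa, 3))
```

Here the observed agreement is 0.85 and the chance agreement is 0.5, so kappa is about 0.7. A value of 1 means perfect agreement and 0 means agreement no better than chance.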

Plot example

from matplotlib import pyplot as plt
plt.title('Kappa according to Cross-validation methods')
plt.boxplot(kappas, labels=[str(type(i).__name__) for i in CVs], patch_artist=True)
plt.grid()
plt.ylabel('Kappa')
plt.xticks(rotation=15)
plt.show()
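As a numeric complement to the boxplot, the per-fold kappas can be reduced to a mean and a spread per method. The sketch below hard-codes the values printed in the output of this example so that it runs on its own. The names `kappas_by_cv` and `summary` are introduced here for illustration.

```python
from statistics import mean

# Per-fold kappas copied from the output printed earlier in this example.
kappas_by_cv = {
    'RandomStratifiedKFold': [0.9177889428851054, 0.8989253671111543],
    'LeavePSubGroupOut': [0.7948119033434562, 0.7078871023125154],
    'LeaveOneSubGroupOut': [0.8970485707645829, 0.7571515766489976],
    'StratifiedKFold': [0.9105668061997964, 0.8981956129176757],
}

# Mean kappa and fold-to-fold spread (max - min) for each method.
summary = {name: (mean(values), max(values) - min(values))
           for name, values in kappas_by_cv.items()}

for name, (avg, spread) in summary.items():
    print(f'{name:<22} mean={avg:.3f} spread={spread:.3f}')
```

In this run the two random methods average about 0.90 to 0.91, while the sub-group methods average about 0.75 and 0.83 with a larger spread between folds. With only two folds per method this is an indication rather than a firm conclusion.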

Total running time of the script: (0 minutes 5.926 seconds)