Note

Click here to download the full example code

Learn algorithm and customize your input raster without writing it on disk¶

This example shows how to customize your raster (ndvi, smooth signal…) in the learning process to avoi generate a new raster.

Import librairies¶

from museotoolbox.ai import SuperLearner

from museotoolbox.processing import extract_ROI

from museotoolbox import datasets

from sklearn.ensemble import RandomForestClassifier

from sklearn import metrics

Load HistoricalMap dataset¶

raster,vector = datasets.load_historical_data(low_res=True)

field = 'Class'

Initialize Random-Forest and metrics¶

classifier = RandomForestClassifier(random_state=12,n_jobs=1)

kappa = metrics.make_scorer(metrics.cohen_kappa_score)

f1_mean = metrics.make_scorer(metrics.f1_score,average='micro')

scoring = dict(kappa=kappa,f1_mean=f1_mean,accuracy='accuracy')

Start learning¶

sklearn will compute different metrics, but will keep best results from kappa (refit=’kappa’)

SL = SuperLearner(classifier=classifier,param_grid=dict(n_estimators=[10]),n_jobs=1,verbose=1)

Create or use custom function

def reduceBands(X,bandToKeep=[0,2]):

# this function get the first and the last band

X=X[:,bandToKeep].reshape(-1,len(bandToKeep))

return X

# add this function to learnAndPredict class

SL.customize_array(reduceBands)

# if you learn from vector, refit according to the f1_mean

X,y = extract_ROI(raster,vector,field)

SL.fit(X,y,cv=2,scoring=scoring,refit='f1_mean')

Out:

Fitting 2 folds for each of 1 candidates, totalling 2 fits

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.1s finished

best score : 0.9187145557655955

best n_estimators : 10

Read the model¶

print(SL.model)

print(SL.model.cv_results_)

print(SL.model.best_score_)

Out:

GridSearchCV(cv=<museotoolbox.cross_validation.RandomStratifiedKFold object at 0x7f7fdf7d3690>,

estimator=RandomForestClassifier(n_jobs=1, random_state=12),

n_jobs=1, param_grid={'n_estimators': [10]}, refit='f1_mean',

scoring={'accuracy': 'accuracy',

'f1_mean': make_scorer(f1_score, average=micro),

'kappa': make_scorer(cohen_kappa_score)},

verbose=1)

{'mean_fit_time': array([0.02137256]), 'std_fit_time': array([0.00026202]), 'mean_score_time': array([0.00601017]), 'std_score_time': array([5.94854355e-05]), 'param_n_estimators': masked_array(data=[10],

mask=[False],

fill_value='?',

dtype=object), 'params': [{'n_estimators': 10}], 'split0_test_kappa': array([0.85692241]), 'split1_test_kappa': array([0.85752681]), 'mean_test_kappa': array([0.85722461]), 'std_test_kappa': array([0.0003022]), 'rank_test_kappa': array([1], dtype=int32), 'split0_test_f1_mean': array([0.91871456]), 'split1_test_f1_mean': array([0.91871456]), 'mean_test_f1_mean': array([0.91871456]), 'std_test_f1_mean': array([0.]), 'rank_test_f1_mean': array([1], dtype=int32), 'split0_test_accuracy': array([0.91871456]), 'split1_test_accuracy': array([0.91871456]), 'mean_test_accuracy': array([0.91871456]), 'std_test_accuracy': array([0.]), 'rank_test_accuracy': array([1], dtype=int32)}

0.9187145557655955

Get F1 for every class from best params¶

for stats in SL.get_stats_from_cv(confusion_matrix=False,F1=True):

print(stats['F1'])

Out:

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s finished

[0.94966269 0.77289377 0.99649123 0.74418605 0. ]

[0.94994786 0.77697842 0.99647887 0.73282443 0. ]

Get each confusion matrix from folds¶

for stats in SL.get_stats_from_cv(confusion_matrix=True):

print(stats['confusion_matrix'])

Out:

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.1s finished

[[915 30 0 1 1]

[ 64 211 0 14 0]

[ 0 0 284 0 0]

[ 0 16 2 48 0]

[ 1 0 0 0 0]]

[[911 34 0 2 0]

[ 59 216 0 14 0]

[ 0 0 283 1 0]

[ 0 17 1 48 0]

[ 1 0 0 0 0]]

Save each confusion matrix from folds¶

SL.save_cm_from_cv('/tmp/testMTB/',prefix='RS50_')

Out:

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.1s finished

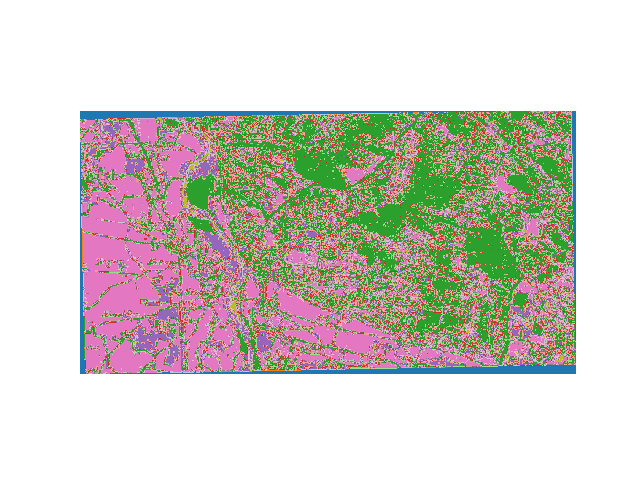

Predict map¶

SL.predict_image(raster,'/tmp/classification.tif',

higher_confidence='/tmp/confidence.tif',

confidence_per_class='/tmp/confidencePerClass.tif')

Out:

Total number of blocks : 6

Detected 1 band for function predict_array.

No data is set to : 0.

Detected 5 bands for function predict_confidence_per_class.

No data is set to : -32768.

Detected 1 band for function predict_higher_confidence.

No data is set to : -32768.

Batch processing (6 blocks using 23Mo of ram)

Prediction... [##......................................]5%

Prediction... [####....................................]11%

Prediction... [######..................................]16%

Prediction... [########................................]22%

Prediction... [###########.............................]27%

Prediction... [#############...........................]33%

Prediction... [###############.........................]38%

Prediction... [#################.......................]44%

Prediction... [####################....................]50%

Prediction... [######################..................]55%

Prediction... [########################................]61%

Prediction... [##########################..............]66%

Prediction... [############################............]72%

Prediction... [###############################.........]77%

Prediction... [#################################.......]83%

Prediction... [###################################.....]88%

Prediction... [#####################################...]94%

Prediction... [#######################################.]99%

Prediction... [########################################]100%

Plot example

from matplotlib import pyplot as plt

from osgeo import gdal

src=gdal.Open('/tmp/classification.tif')

plt.imshow(src.GetRasterBand(1).ReadAsArray(),cmap=plt.get_cmap('tab20'))

plt.axis('off')

plt.show()

Total running time of the script: ( 0 minutes 1.092 seconds)